The business case for a document AI platform practically writes itself. Processing times drop from days to minutes. Manual data entry disappears. Compliance bottlenecks loosen. Your operations team is ready to sign off before the vendor finishes the demo.

Then IT gets involved.

This is not a criticism. IT teams exist to protect the organization from exactly the kind of risks that come with plugging a new AI platform into your document workflows. Invoices, contracts, patient records, mortgage applications, and HR files flow through document processing systems every day. The wrong platform choice does not just slow things down. It creates breach exposure, compliance violations, and audit nightmares.

What makes document AI evaluations different from other software procurement is the data sensitivity involved. You are not connecting a project management tool or a design app. You are routing documents that contain personally identifiable information, financial data, proprietary contracts, and regulated health records through an AI system. Every question IT asks carries real weight.

The good news is that these questions are predictable. IT teams across industries tend to focus on the same six areas when evaluating document AI platforms. If you prepare solid answers before IT ever enters the room, the approval process moves significantly faster. If your vendor cannot answer them clearly, that tells you something important too.

Here are the six questions your IT team will ask, what they are really trying to find out, and what a strong answer looks like.

Why IT Scrutiny Has Increased Around AI Platforms

Before getting into the six questions, it helps to understand why document AI platforms face more scrutiny than many other software categories.

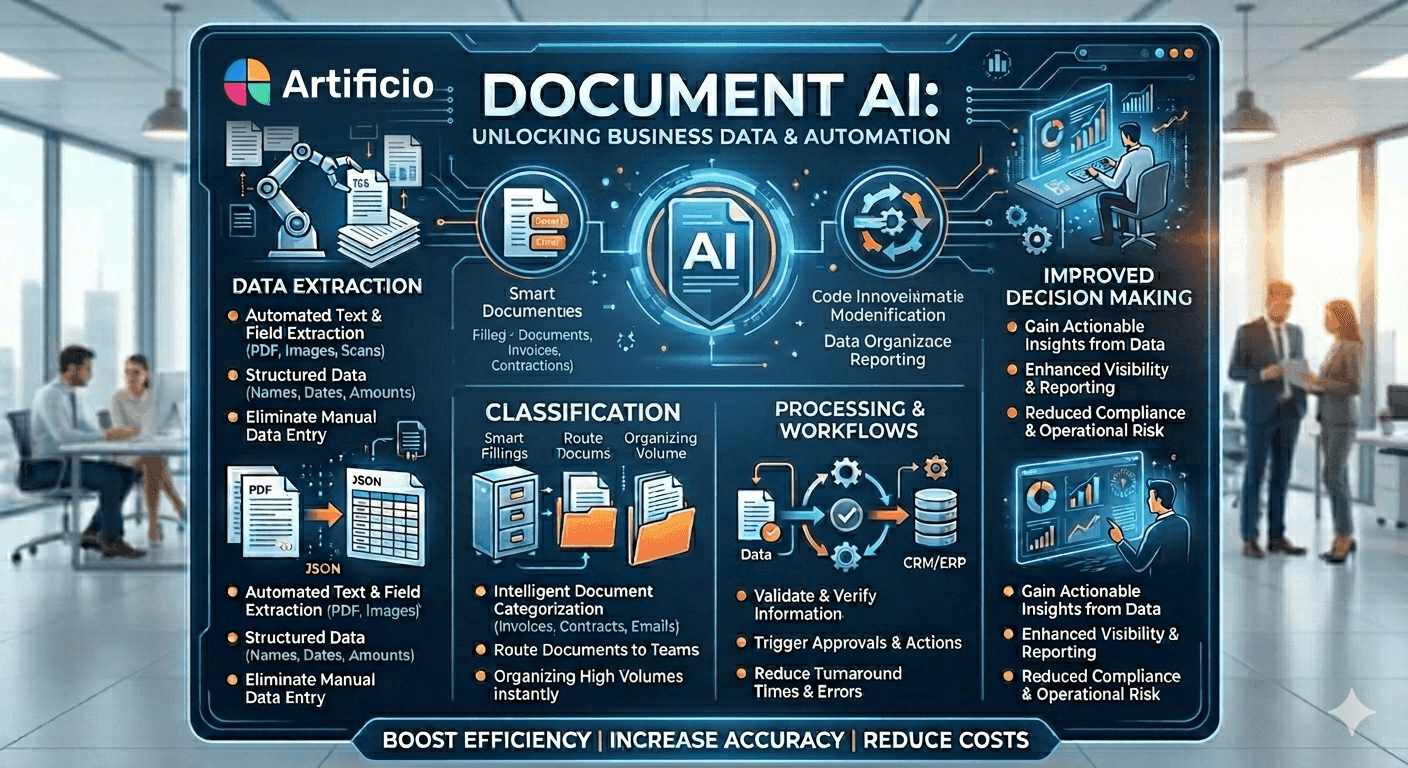

Traditional document management tools mostly store and retrieve files. Document AI platforms actively process content. They extract data fields, classify document types, apply business logic, and sometimes feed extracted data into downstream systems like ERPs, CRMs, or compliance databases. Each of those steps is a potential exposure point.

Regulators and auditors have noticed. Laws such as GDPR and HIPAA, industry standards such as PCI DSS, and assurance frameworks such as SOC 2 and ISO 27001 all set expectations for how sensitive data is handled, and those expectations apply whether a person or an AI system does the processing. IT teams in regulated industries know that a poorly evaluated vendor can create compliance obligations that last for years after the initial contract ends.

That context explains why IT teams ask the questions they do. They are not trying to block progress. They are mapping risk.

Question 1: How Is Our Data Secured?

This is always first. And it covers more ground than it appears to.

IT teams want to understand encryption standards for data in transit and at rest, but they also want to know about data isolation. If your organization shares infrastructure with dozens of other companies using the same platform, what prevents a misconfiguration from exposing your documents to another tenant?

Strong platforms use AES-256 encryption at rest and TLS 1.2 or higher in transit. But the isolation question matters just as much. Multi-tenant architectures can be secure when tenant separation is enforced and tested at every layer, and dedicated infrastructure options take the cross-tenant question off the table.
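As a minimal sketch of what the in-transit requirement looks like from the client side, the Python snippet below builds a TLS context that refuses anything older than TLS 1.2. It uses only the standard library `ssl` module. The function name `make_strict_tls_context` is illustrative and is not part of any vendor SDK.

```python
import ssl


def make_strict_tls_context() -> ssl.SSLContext:
    """Build a client-side TLS context that rejects protocols older than TLS 1.2."""
    # create_default_context() enables certificate and hostname verification.
    context = ssl.create_default_context()
    # Refuse to negotiate anything below TLS 1.2.
    context.minimum_version = ssl.TLSVersion.TLSv1_2
    return context


context = make_strict_tls_context()
print(context.minimum_version.name)
```

A context like this can be passed to standard-library clients such as `urllib.request.urlopen` through the `context` argument. Encryption at rest cannot be checked from the client side, so it still has to be confirmed through vendor documentation and audit reports.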

Penetration testing and third-party security audits are also fair game here. IT teams want to see evidence of security posture, not just assurances. A SOC 2 Type II report has become a baseline expectation in most enterprise environments because it shows that an independent auditor tested the vendor's security controls over a sustained period, not just at a single point in time.

Zero-trust architecture is increasingly common in enterprise environments, and document AI platforms need to fit into that model. This means the platform should support network segmentation, least-privilege access controls, and the ability to operate without requiring broad network permissions that conflict with existing security policies.

What to look for: a current SOC 2 Type II report, ISO 27001 certification, documented encryption standards, penetration test results on request, and a clear answer on multi-tenant vs. dedicated infrastructure.

Question 2: Where Does Our Data Live?

Data residency is not just a technical preference. For many organizations, it is a legal requirement.

GDPR restricts transfers of EU personal data to countries without an adequate level of data protection unless specific safeguards, such as standard contractual clauses, are in place. HIPAA does not dictate a storage location, but covered entities remain accountable for PHI wherever a vendor processes or stores it, which in practice means knowing where that is and having a business associate agreement in place. UK data protection law has similar transfer restrictions post-Brexit. For organizations operating across borders, data residency questions get complicated fast.

IT teams want to know: where are the servers? Which cloud regions are used? Can data be restricted to a specific country or region? What happens during disaster recovery failover? Does the backup data go to a different jurisdiction than the primary data?

On-premise deployment answers many of these questions cleanly. When the platform runs inside your own infrastructure, data never leaves your control. For organizations that handle highly sensitive data or operate in jurisdictions with strict localization requirements, on-premise is often the only acceptable architecture.

Cloud-based platforms that offer regional data residency controls (dedicated EU hosting, US-only storage, etc.) can satisfy many compliance requirements. But the documentation needs to be specific. "We are based in the US" is not the same as "your data is processed and stored exclusively in AWS eu-west-1."
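One way to make a vendor's residency answers checkable is to write them down per data category and compare them against an allowlist. The sketch below assumes an EU-only policy; the `DataLocation` type, the allowlist, and the sample vendor answers are all hypothetical and exist only to illustrate the check.

```python
from dataclasses import dataclass

# Assumption: the organization's policy allows only these AWS regions.
ALLOWED_REGIONS = {"eu-west-1", "eu-central-1"}


@dataclass(frozen=True)
class DataLocation:
    purpose: str  # e.g. "primary", "backup", "logs", "dr_failover"
    region: str   # cloud region the vendor names in writing


def residency_violations(locations, allowed=ALLOWED_REGIONS):
    """Return every declared location that falls outside the allowed regions."""
    return [loc for loc in locations if loc.region not in allowed]


# Hypothetical answers from a vendor security questionnaire.
vendor_answers = [
    DataLocation("primary", "eu-west-1"),
    DataLocation("backup", "eu-central-1"),
    DataLocation("logs", "us-east-1"),
    DataLocation("dr_failover", "eu-west-1"),
]

violations = residency_violations(vendor_answers)
for loc in violations:
    print(f"{loc.purpose} data is stored in {loc.region}, outside the allowed regions")
```

In this made-up example the primary and backup data pass, but the log data does not, which is exactly the kind of gap a headline residency statement tends to hide.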

What to look for: specific data center locations, regional deployment options, written confirmation of data residency commitments, and clarity on where backup and log data is stored.

Question 3: Does It Support Our Identity and Access Management?

Every enterprise has an identity stack. Whether it runs on Okta, Microsoft Entra ID (formerly Azure Active Directory), Google Workspace, or an on-premise Active Directory deployment, that system is how the organization controls who can access what.

A document AI platform that forces users to manage a separate set of credentials creates two problems. First, it is a security risk because standalone credentials often fall outside the organization's password policies, MFA requirements, and offboarding procedures. When an employee leaves, a forgotten standalone account becomes a persistent access vulnerability. Second, it creates an administrative burden. IT teams do not want to manage two identity systems.

SAML 2.0 remains the most widely deployed standard for enterprise SSO integration. OpenID Connect (OIDC) is also widely supported and is often preferred in modern cloud environments. Either way, the platform should be able to delegate authentication to your existing identity provider, which means MFA enforcement, conditional access policies, and session management are all inherited from your central stack.

Role-based access control within the platform matters separately. SSO handles authentication (who you are). RBAC handles authorization (what you are allowed to do). IT teams want to confirm that the platform supports granular role definitions that map to your organizational structure. A document processor should have different access than a compliance reviewer, a department manager, or a platform administrator.
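The split between authentication and authorization can be sketched in a few lines. In the example below, the identity provider is assumed to have already authenticated the user and supplied a list of role names; the role names follow the ones mentioned above, while the permission strings and the `is_allowed` helper are hypothetical and do not reflect any particular platform's model.

```python
# Hypothetical mapping from roles to the permissions they grant.
ROLE_PERMISSIONS = {
    "document_processor": {"document:upload", "document:read"},
    "compliance_reviewer": {"document:read", "audit_log:read"},
    "department_manager": {"document:read", "report:read"},
    "platform_admin": {"document:read", "user:manage", "config:write"},
}


def is_allowed(roles, permission):
    """Authorization check: does any of the user's roles grant this permission?

    Unknown roles grant nothing, so access is denied by default.
    """
    return any(permission in ROLE_PERMISSIONS.get(role, set()) for role in roles)


# Roles would normally arrive from the identity provider after SSO login.
print(is_allowed(["document_processor"], "document:upload"))
print(is_allowed(["document_processor"], "audit_log:read"))
```

The deny-by-default lookup is the design choice worth asking vendors about: a role that is missing or misspelled should result in no access, not broad access.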

What to look for: SAML 2.0 or OIDC support, integration with your specific identity provider, granular RBAC, and clean offboarding workflows that revoke access when users are deprovisioned in the central directory.

Question 4: How Does It Integrate with Our Existing Systems?

Document AI does not operate in isolation. Extracted data needs to flow into ERPs, CRMs, case management systems, and workflow tools. Input documents come from email servers, cloud storage, physical scanners, and customer-facing portals. Integration quality determines whether the platform accelerates your operations or creates new data silos.

IT teams evaluate APIs from several angles. Coverage comes first: can the API handle every operation the business team needs, or does it require workarounds? REST APIs with comprehensive endpoint documentation are the baseline. Webhook support matters for real-time event-driven workflows where downstream systems need to react immediately when a document is processed.
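Webhooks raise their own security question: how does the receiving system know a callback really came from the platform? Many webhook providers sign each payload with a shared secret. The sketch below assumes an HMAC-SHA256 signature sent as a hex string, which is a common pattern but an assumption here; the function names, secret, and payload are illustrative, so check the vendor's documentation for the actual scheme.

```python
import hashlib
import hmac


def sign_payload(secret: bytes, body: bytes) -> str:
    """Compute the hex HMAC-SHA256 signature the sender is assumed to attach."""
    return hmac.new(secret, body, hashlib.sha256).hexdigest()


def is_valid_webhook(secret: bytes, body: bytes, received_signature: str) -> bool:
    """Recompute the signature and compare it in constant time."""
    expected = sign_payload(secret, body)
    return hmac.compare_digest(expected, received_signature)


# Hypothetical shared secret and event payload.
secret = b"example-shared-secret"
body = b'{"event": "document.processed", "document_id": "doc-123"}'
signature = sign_payload(secret, body)

print(is_valid_webhook(secret, body, signature))
print(is_valid_webhook(secret, body + b" ", signature))
```

Using `hmac.compare_digest` rather than `==` avoids leaking timing information about how much of the signature matched.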

Stability and versioning are just as important as coverage. An API that changes without warning or that deprecates endpoints on short notice creates maintenance burden. IT teams want to see version-controlled APIs with clear deprecation policies and meaningful advance notice before breaking changes.

Authentication and rate limiting affect security and reliability. The platform should support API keys with appropriate scoping, OAuth 2.0 where delegated access is needed, and transparent rate limits that match the volume requirements of your use case.
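Transparent rate limits matter because integrations have to be written around them. A rough sketch of a client-side retry policy is shown below, assuming the API signals throttling with HTTP 429 and may send a `Retry-After` header expressed in seconds. The function name and the default values are illustrative, and the HTTP-date form of `Retry-After` is deliberately not handled.

```python
from typing import Optional


def retry_delay_seconds(attempt: int,
                        retry_after_header: Optional[str] = None,
                        base: float = 1.0,
                        cap: float = 60.0) -> float:
    """Decide how long to wait before retrying a throttled request.

    If the server sent a Retry-After value in seconds, honor it.
    Otherwise fall back to capped exponential backoff: base * 2**attempt.
    """
    if retry_after_header is not None and retry_after_header.strip().isdigit():
        return float(retry_after_header.strip())
    return min(cap, base * (2 ** attempt))


for attempt in range(4):
    print(attempt, retry_delay_seconds(attempt))
```

Production clients usually add random jitter to the backoff so that many workers do not retry at the same instant; it is left out here to keep the example deterministic.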

For organizations running SAP, Salesforce, ServiceNow, or other enterprise platforms, pre-built connectors accelerate deployment considerably. But connectors built on top of well-documented REST APIs are more trustworthy than proprietary integration modules that create vendor lock-in.

What to look for: REST API with comprehensive documentation, webhook support, stable versioning with a clear deprecation policy, appropriate authentication options, and either native connectors or an open integration architecture.

Question 5: What Audit and Compliance Logging Does It Provide?

When something goes wrong, or when a regulator asks questions, your organization needs to know exactly what happened with every document that entered the system.

Audit logging in document AI platforms covers several distinct needs. Access logs show who viewed which documents and when. Processing logs show what the AI extracted from each document and what confidence levels were applied. Change logs show when records were modified, by whom, and what the previous values were. System logs show infrastructure events, error conditions, and configuration changes.

Regulatory audits are the obvious use case, but internal investigations and dispute resolution matter too. If a client disputes the terms extracted from a contract, or if an employee raises a concern about how their personal data was handled, audit logs provide the evidence trail.

Tamper resistance is a specific concern IT teams raise with AI-based systems. Logs need to be immutable, meaning that neither users nor administrators can alter or delete historical log entries. Platforms that store logs in write-once storage or that use cryptographic integrity checks provide stronger guarantees than those where log access is simply role-restricted.
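To make "cryptographic integrity checks" concrete, here is a minimal hash-chain sketch: each log entry stores the hash of the previous entry, so altering any historical record breaks every hash after it. This illustrates the general technique only. It is not a description of how any specific platform stores its logs, and the field names and sample records are hypothetical.

```python
import hashlib
import json

GENESIS_HASH = "0" * 64  # placeholder "previous hash" for the first entry


def entry_hash(prev_hash: str, record: dict) -> str:
    """Hash the previous entry's hash together with a canonical form of the record."""
    payload = json.dumps(record, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256((prev_hash + payload).encode("utf-8")).hexdigest()


def append_entry(log: list, record: dict) -> None:
    """Append a record, linking it to the hash of the entry before it."""
    prev_hash = log[-1]["hash"] if log else GENESIS_HASH
    log.append({
        "record": record,
        "prev_hash": prev_hash,
        "hash": entry_hash(prev_hash, record),
    })


def verify_chain(log: list) -> bool:
    """Recompute every hash in order; any edited or removed entry breaks the chain."""
    prev_hash = GENESIS_HASH
    for entry in log:
        if entry["prev_hash"] != prev_hash:
            return False
        if entry["hash"] != entry_hash(prev_hash, entry["record"]):
            return False
        prev_hash = entry["hash"]
    return True


log = []
append_entry(log, {"user": "alice", "action": "view", "document": "doc-123"})
append_entry(log, {"user": "bob", "action": "edit", "document": "doc-123"})
print(verify_chain(log))

log[0]["record"]["user"] = "mallory"  # simulate tampering with history
print(verify_chain(log))
```

A hash chain detects tampering but does not prevent it. Someone with full write access could rebuild the whole chain, which is why the latest hash is typically anchored somewhere the platform's administrators cannot modify, such as write-once storage or an external system.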

Retention periods need to match your compliance requirements. GDPR right-to-erasure obligations and long-term financial record-keeping requirements can pull in opposite directions, so the platform needs to support configurable retention with the ability to honor deletion requests while preserving non-personal audit evidence.

What to look for: comprehensive logging across access, processing, and system events; immutable log storage; configurable retention periods; export capabilities for SIEM tools; and clear documentation of what each log type captures.

Question 6: What Are the SLA Terms and How Are Breaches Handled?

Service level agreements are sometimes treated as boilerplate, but IT teams read them carefully because they define accountability.

Uptime commitments matter most for organizations that process documents in time-sensitive workflows. A mortgage broker processing same-day pre-approvals has different availability needs than an insurance company batch-processing historical claims. The platform's SLA needs to match the operational reality of your use case.

The definition of "downtime" varies significantly between vendors. Some define it as complete unavailability, which means degraded performance does not count. Others specify more granular service tiers. IT teams want to understand what the vendor is actually promising, not just the headline percentage.
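It helps to translate the headline percentage into time. The short calculation below converts an uptime commitment into the downtime it permits over a billing period. The 30-day period is an assumption; real SLAs define their own measurement window and their own exclusions, such as scheduled maintenance.

```python
def allowed_downtime_minutes(uptime_percent: float, period_days: int = 30) -> float:
    """Minutes of downtime an uptime commitment permits over the given period."""
    if not 0 <= uptime_percent <= 100:
        raise ValueError("uptime_percent must be between 0 and 100")
    total_minutes = period_days * 24 * 60
    return total_minutes * (100 - uptime_percent) / 100


for sla in (99.0, 99.5, 99.9, 99.99):
    minutes = allowed_downtime_minutes(sla)
    print(f"{sla}% uptime allows about {minutes:.1f} minutes of downtime per 30 days")
```

Over a 30-day month, a 99.9% commitment allows roughly 43 minutes of downtime, while 99.99% allows a little over 4 minutes. If degraded performance does not count as downtime, the time the platform is effectively unusable can be considerably longer than these figures suggest.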

Support response times are equally important. A critical document processing failure at 2 AM on a Friday has different urgency than a formatting question. SLAs should specify different response time commitments by severity level, and those commitments need to be realistic enough to hold up under pressure.

Breach remedies are where many SLAs disappoint. A service credit that amounts to a small fraction of monthly fees is not meaningful compensation for a production outage that disrupted operations, triggered customer penalties, or created compliance exposure. IT teams want to see proportionate remedies and clear escalation paths.

For on-premise deployments, the SLA conversation shifts to support coverage. Response times for critical issues, software update frequency, security patch turnaround, and the vendor's commitment to long-term platform maintenance all become negotiating points.

What to look for: specific uptime percentages with a clear downtime definition, tiered support response times by severity, proportionate breach remedies, and clear escalation procedures.

Bringing IT Into the Process Earlier

One pattern that consistently slows down document AI procurement is bringing IT in at the final stage, after business teams have already selected a vendor and negotiated pricing. By that point, any IT concern that cannot be resolved means starting over, which creates pressure to approve a platform that might not meet standards.

Organizations that move fastest tend to involve IT in the vendor evaluation phase, not just the approval phase. This means sharing the IT checklist with potential vendors early, requiring responses to security questionnaires before demos rather than after, and including IT stakeholders in discovery calls where architecture questions come up naturally.

Vendors that have answered these questions hundreds of times will have documentation ready. Security white papers, penetration test summaries, SOC 2 reports, API documentation, and SLA templates should be available before IT ever formally reviews the platform. If a vendor struggles to produce this material, that is a signal worth paying attention to.

What Strong Answers Look Like

Platforms built for enterprise environments do not treat security, compliance, and integration as optional features. They build them into the architecture from the start, which means the documentation exists, the certifications are current, and the answers to these six questions are clear without lengthy internal escalations.

Artificio was designed for exactly this kind of scrutiny. The platform supports on-premise and cloud deployment with full data residency controls, SAML 2.0 and OIDC-based SSO integration, REST APIs with comprehensive documentation, immutable audit logging with configurable retention, and enterprise SLA terms with meaningful support commitments.

When your IT team asks these questions, the answers should come quickly and completely. That is how you know the platform was built for enterprise use, not retrofitted for it.

The six questions above are not obstacles. They are the right filter. Use them.